You might notice how easy it is to find a familiar face in a sea of Facebook posts. It’s like your brain’s own superpower: pattern recognition. But have you ever stopped to think about the tech behind it? If you’ve ever wondered what pattern recognition is, you’re in the right place.

In this article, we’re diving into the cool world of pattern recognition tech, breaking it down in plain English. But before we geek out, let’s talk real talk: why does this stuff matter for business? Without a clear goal, it’s just fancy tech with no purpose.

So, you in? Let’s get into it!

What is Pattern Recognition?

As humans, we’re wired by evolution to recognize patterns and connect them with our stored memories. Broadly speaking, pattern recognition involves the ability to remember and recall patterns after repeated exposure.

In the realm of machine learning, this recognition involves matching incoming data against information the model has already stored or learned. Essentially, models rely on familiar patterns to identify similarities effectively.

While the two fields overlap in places, with image classification as one example, it’s important to note that computer vision and pattern recognition are distinct. Pattern recognition deals with various types of data and focuses on automated pattern discovery.

Pattern recognition is the task of assigning a class to an observation based on patterns extracted from data.

On the other hand, computer vision primarily revolves around tasks like image processing, object detection, classification, and segmentation, although it doesn’t solely rely on pattern recognition.

Types of Pattern Recognition

Selecting the right algorithms for pattern recognition can be quite a challenge. Let’s touch on six common ones:

1. Statistical

This approach relies heavily on probability, using statistical techniques to learn from examples. By analyzing observations, the model formulates rules that can be applied to future data.
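To make the statistical idea concrete, here is a minimal sketch that learns a mean and variance per class from examples and then picks the most probable class for a new value. It assumes each class follows a bell curve (a Gaussian), which is only one of many possible statistical models. The fruit weights and the function names `fit_gaussians` and `classify_gaussian` are made up for illustration.

```python
import math
from collections import defaultdict

def fit_gaussians(samples):
    """Estimate (prior, mean, variance) per class from (value, label) pairs."""
    grouped = defaultdict(list)
    for value, label in samples:
        grouped[label].append(value)
    model = {}
    for label, values in grouped.items():
        mean = sum(values) / len(values)
        variance = sum((v - mean) ** 2 for v in values) / len(values)
        # Floor the variance so a class with identical values cannot divide by zero
        model[label] = (len(values) / len(samples), mean, max(variance, 1e-9))
    return model

def log_score(x, prior, mean, variance):
    # log(prior) + log(Gaussian density), dropping the constant shared by all classes
    return math.log(prior) - 0.5 * math.log(variance) - (x - mean) ** 2 / (2 * variance)

def classify_gaussian(model, x):
    """Return the class whose learned distribution makes x most probable."""
    return max(model, key=lambda label: log_score(x, *model[label]))

# Made-up training data: fruit weights in grams
training = [(70, "kiwi"), (80, "kiwi"), (90, "kiwi"),
            (150, "apple"), (160, "apple"), (170, "apple")]
model = fit_gaussians(training)
print(classify_gaussian(model, 85))   # closer to the kiwi weights
print(classify_gaussian(model, 155))  # closer to the apple weights
```

The "rules" the model formulates here are just three numbers per class, which is why this style of recognition is cheap to train and easy to inspect.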

2. Structural

When patterns are complex, statistical methods might not cut it. Structural recognition takes a hierarchical approach, breaking patterns down into subclasses. It’s great for tasks like image and shape analysis, where relationships between elements are intricate.
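One common way to read the structural approach is: describe a pattern as a sequence of simple parts, then define shapes by how those parts fit together. The sketch below assumes shapes are drawn as straight strokes on a grid. The stroke alphabet (`R`, `L`, `U`, `D`) and the two shape rules are hypothetical and chosen only to keep the example short.

```python
import re

def to_primitives(points):
    """Encode a polyline on a grid as stroke primitives: R, L, U, D ('?' for anything else)."""
    symbols = []
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        dx, dy = x1 - x0, y1 - y0
        if dy == 0 and dx > 0:
            symbols.append("R")
        elif dy == 0 and dx < 0:
            symbols.append("L")
        elif dx == 0 and dy > 0:
            symbols.append("U")
        elif dx == 0 and dy < 0:
            symbols.append("D")
        else:
            symbols.append("?")  # diagonal or zero-length stroke
    return "".join(symbols)

# Hypothetical rules: each shape is a required arrangement of sub-parts
SHAPE_RULES = {
    "rectangle": re.compile(r"R+D+L+U+"),
    "staircase": re.compile(r"(R+U+){2,}"),
}

def recognize_shape(points):
    """Return the name of the first rule the stroke sequence satisfies."""
    primitives = to_primitives(points)
    for name, rule in SHAPE_RULES.items():
        if rule.fullmatch(primitives):
            return name
    return "unknown"

print(recognize_shape([(0, 0), (3, 0), (3, -2), (0, -2), (0, 0)]))  # strokes: RDLU
print(recognize_shape([(0, 0), (1, 0), (1, 1), (2, 1), (2, 2)]))    # strokes: RURU
```

Note that the rectangle rule only accepts outlines that start with a rightward stroke. A real system would need rules for every starting point and drawing direction.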

3. Neural Network

Using artificial neural networks, this method is more flexible than traditional algorithms. Neural networks, inspired by biological concepts, excel in pattern classification. Feed-forward networks, in particular, are effective for recognition: they learn by getting feedback on how they responded to each input pattern and adjusting accordingly.
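Here is a rough sketch of that feedback loop using the simplest feed-forward network there is, a single perceptron. Real networks have many layers and smoother activation functions, so treat this as a toy. The AND pattern, the learning rate, and the epoch count are all assumptions picked for the example.

```python
def predict(weights, bias, inputs):
    """Forward pass: weighted sum followed by a step activation."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0

def train_perceptron(samples, epochs=20, learning_rate=1.0):
    """samples: list of (inputs, target). Returns the learned (weights, bias)."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)  # the feedback signal
            # Nudge each weight in the direction that reduces the error
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

# Toy pattern: output 1 only when both inputs are 1 (logical AND)
and_samples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(and_samples)
print([predict(weights, bias, x) for x, _ in and_samples])  # → [0, 0, 0, 1]
```

A single perceptron can only separate classes with a straight line, which is exactly why practical networks stack several layers.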

4. Template Matching

Perfect for comparing entities of the same type, template matching compares a target pattern against a stored template. It assesses the similarity between curves, shapes, and more. However, it can be inflexible and requires many templates.
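As a minimal sketch, template matching on a one-dimensional signal can be done by sliding the template along the data and scoring each position. This version scores with the sum of squared differences, which is one of several possible similarity measures. The signal values are made up.

```python
def best_match(signal, template):
    """Slide the template over the signal; return (offset, score) with the lowest
    sum of squared differences. A score of 0 means a perfect match."""
    best_offset, best_score = None, float("inf")
    for offset in range(len(signal) - len(template) + 1):
        window = signal[offset:offset + len(template)]
        score = sum((s - t) ** 2 for s, t in zip(window, template))
        if score < best_score:
            best_offset, best_score = offset, score
    return best_offset, best_score

signal = [0, 0, 1, 3, 1, 0, 0, 2, 5, 2, 0]
print(best_match(signal, [2, 5, 2]))  # → (7, 0), an exact match
print(best_match(signal, [1, 3, 2]))  # → (2, 1), the closest imperfect match
```

The inflexibility is easy to see here: stretch or scale the peak in the signal and the same template no longer scores well, so you would need another template for each variation.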

5. Fuzzy-Based

In the real world, uncertainty is common, just like in our cognitive system. Fuzzy-based algorithms, which use many-valued logic, accommodate this uncertainty. They’re highly applicable in recognizing objects, both in the physical and digital realms.
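A quick sketch of what "many-valued" means in practice: instead of a value being either in a category or out of it, it gets a degree of membership between 0 and 1. The triangular shape used below is one common choice of membership function. The temperature categories and their boundaries are hypothetical.

```python
def triangular(x, left, peak, right):
    """Degree of membership in [0, 1] for a triangular fuzzy set."""
    if x <= left or x >= right:
        return 0.0
    if x <= peak:
        return (x - left) / (peak - left)
    return (right - x) / (right - peak)

# Hypothetical fuzzy sets for room temperature in degrees Celsius: (left, peak, right)
TEMPERATURE_SETS = {
    "cold": (0, 10, 20),
    "warm": (15, 22.5, 30),
    "hot": (25, 35, 45),
}

def memberships(x):
    """Return how strongly x belongs to each fuzzy set."""
    return {name: round(triangular(x, *params), 2)
            for name, params in TEMPERATURE_SETS.items()}

print(memberships(18))  # partly cold and partly warm at the same time
```

At 18 degrees the reading is 0.2 "cold" and 0.4 "warm" at once, which is the kind of overlap a strict yes/no rule cannot express.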

6. Hybrid

Hybrid models blend different algorithms to leverage their respective strengths. They employ multiple classifiers, each trained on its own feature space, and reach a final decision by pooling the classifiers’ outputs, often giving more say to the more accurate ones.
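One simple way to combine classifiers is a weighted vote, where each classifier’s say depends on how accurate it has been. The sketch below assumes three deliberately crude classifiers and made-up accuracy weights. Real hybrid systems may combine models in more sophisticated ways.

```python
from collections import defaultdict

# Three hypothetical classifiers for a (width, height) measurement
def by_width(sample):
    return "large" if sample[0] > 5 else "small"

def by_height(sample):
    return "large" if sample[1] > 5 else "small"

def by_area(sample):
    return "large" if sample[0] * sample[1] > 25 else "small"

def weighted_vote(classifiers, sample):
    """classifiers: list of (predict_fn, weight). Returns the label with the most total weight."""
    totals = defaultdict(float)
    for predict_fn, weight in classifiers:
        totals[predict_fn(sample)] += weight
    return max(totals, key=totals.get)

# The weights stand in for each classifier's accuracy on held-out data (made-up numbers)
ensemble = [(by_width, 0.6), (by_height, 0.7), (by_area, 0.9)]

print(weighted_vote(ensemble, (6, 3)))  # width says large, the other two say small
print(weighted_vote(ensemble, (7, 4)))  # width and area say large, height says small
```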

Pattern Recognition in Machine Learning

As a fundamental component of computer vision, pattern recognition seeks to replicate the capabilities of the human brain. Consider this: the ability to make predictions on unseen data is achievable because models can recognize recurring patterns, regardless of the data format—whether it’s an image, video, text, or audio.

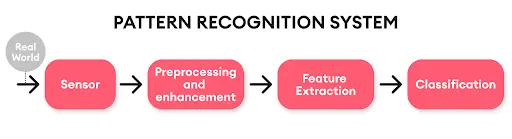

Though inherently intricate, pattern recognition involves analyzing input data, extracting patterns, and comparing them with stored data. This process can be divided into two phases: explorative, where algorithms uncover patterns, and descriptive, where patterns are grouped and attributed to the initial data. Breaking it down further, pattern recognition in machine learning follows this path:

1. Data Collection

High-quality datasets are essential for accurate recognition. Open-source datasets can save time, but ensuring data quality is paramount. In cases where manual collection is impractical, synthetic datasets may be generated.
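For a feel of what generating a synthetic dataset can look like, here is a minimal sketch that draws labelled 2-D points from two clusters. The class names, cluster centres, and spread are all made up, and real synthetic data is usually modelled on the domain it is meant to imitate.

```python
import random

def make_synthetic_dataset(n_per_class=50, seed=0):
    """Generate labelled 2-D points drawn from two Gaussian blobs (centres are made up)."""
    rng = random.Random(seed)  # fixed seed so the dataset is reproducible
    centres = {"class_a": (0.0, 0.0), "class_b": (4.0, 4.0)}
    dataset = []
    for label, (cx, cy) in centres.items():
        for _ in range(n_per_class):
            point = (rng.gauss(cx, 1.0), rng.gauss(cy, 1.0))
            dataset.append((point, label))
    rng.shuffle(dataset)  # avoid having all of one class first
    return dataset

dataset = make_synthetic_dataset()
print(len(dataset), dataset[0])
```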

2. Pre-processing

This step cleans up noise and inconsistencies in the raw data so the dataset is consistent and predictions become more accurate. Techniques like smoothing and normalization even out variations, such as lighting and intensity in images, so the model is trained on meaningful data.
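The two techniques named above can be sketched in a few lines. This version assumes a simple list of numeric readings, uses a moving average for smoothing, and uses min-max scaling for normalization. Both are common choices, not the only ones, and the sample readings are made up.

```python
def moving_average(values, window=3):
    """Smooth a sequence by averaging each point with its neighbours
    (points near the edges use a shorter window)."""
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - half): i + half + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed

def min_max_normalize(values):
    """Rescale values linearly into the range [0, 1]."""
    low, high = min(values), max(values)
    if high == low:
        return [0.0 for _ in values]  # all values identical, nothing to scale
    return [(v - low) / (high - low) for v in values]

raw = [10, 12, 50, 11, 13, 12]  # made-up brightness readings with one spike
cleaned = min_max_normalize(moving_average(raw))
print(cleaned)
```

Smoothing spreads the spike at position 2 across its neighbours, and normalization then puts every reading on the same 0 to 1 scale regardless of the original units.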

3. Feature Extraction

Input data is transformed into a feature vector, a reduced representation of the input, to address high dimensionality. Features are chosen to be robust to distortions or manipulations of the input while keeping the information needed for accurate classification.
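As a small illustration, the sketch below boils a raw signal of any length down to a four-number feature vector. The particular features (mean, spread, peak-to-peak range, and number of zero crossings) are assumptions chosen for the example. Which features are actually useful depends heavily on the data.

```python
import statistics

def extract_features(signal):
    """Reduce a raw signal to [mean, standard deviation, peak-to-peak, zero crossings]."""
    mean = statistics.fmean(signal)
    std_dev = statistics.pstdev(signal)
    peak_to_peak = max(signal) - min(signal)
    # Count sign changes between neighbouring samples (0 is treated as non-negative)
    crossings = sum(1 for a, b in zip(signal, signal[1:]) if (a < 0) != (b < 0))
    return [mean, std_dev, peak_to_peak, crossings]

print(extract_features([1, -1, 1, -1]))           # fast oscillation
print(extract_features([1, 1, 1, -1, -1, -1]))    # slow oscillation, same amplitude
```

Both signals have the same mean and amplitude, so it is the zero-crossing count that tells them apart. That is the point of picking features deliberately rather than feeding in every raw sample.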

4. Classification

Extracted features are compared with similar patterns, associating each with a relevant class. Supervised learning involves prior knowledge of pattern categories, while unsupervised learning relies on inherent data patterns. Post-processing follows to further guide the system based on the output.
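The supervised and unsupervised flavours can be sketched side by side. The first function below is a k-nearest-neighbours classifier, which needs labelled examples. The second is a bare-bones k-means clustering on plain numbers, which finds groups without any labels. Both are stand-ins picked for brevity, and the data points are made up.

```python
import math
from collections import Counter

def knn_classify(training, query, k=3):
    """Supervised: training is a list of (feature_vector, label).
    Returns the majority label among the k nearest examples."""
    nearest = sorted(training, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

def k_means_1d(values, k=2, iterations=10):
    """Unsupervised: group numbers into k clusters (assumes k >= 2).
    Starts from evenly spaced centroids between the min and max value."""
    low, high = min(values), max(values)
    centroids = [low + i * (high - low) / (k - 1) for i in range(k)]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for v in values:
            nearest = min(range(k), key=lambda i: abs(v - centroids[i]))
            clusters[nearest].append(v)
        # Move each centroid to the mean of its cluster; leave it alone if the cluster is empty
        centroids = [sum(c) / len(c) if c else centroids[i]
                     for i, c in enumerate(clusters)]
    return centroids, clusters

labelled = [((1, 1), "A"), ((1, 2), "A"), ((2, 1), "A"),
            ((6, 6), "B"), ((7, 6), "B"), ((6, 7), "B")]
print(knn_classify(labelled, (2, 2)))        # → A
print(k_means_1d([1, 2, 3, 10, 11, 12]))     # two groups, no labels needed
```

The difference shows up in the inputs: `knn_classify` cannot run without the "A" and "B" labels, while `k_means_1d` only ever sees raw numbers.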

Pattern Recognition Applications and Real Life Examples

With a plethora of algorithms available, the expectations for pattern recognition applications naturally rise. The examples are boundless. Here are several areas where pattern recognition finds its footing:

1. NLP

Recognition algorithms extract insights from data patterns for tasks like plagiarism detection, text generation, translation, and grammar correction.

2. Fingerprint Scanning

Biometric scanning is ubiquitous nowadays, found in smartphones and laptops for added security. These devices analyze fingerprint features through pattern analysis.

3. Seismic Activity Analysis

Scientists analyze seismic records to understand how earthquakes impact the earth’s crust. By recognizing recurring patterns, they can build models to mitigate seismic effects.

4. Audio and Voice Recognition

Speech-to-text converters and personal assistants like Siri and Alexa rely on pattern recognition to understand and interpret audio signals, encoding words and phrases for meaningful responses.

5. Computer Vision

From biological to medical imaging, pattern recognition plays a crucial role in computer vision applications. It aids in tasks like detecting damaged leaves, infected cells, and more.

Conclusion

In conclusion, pattern recognition is the backbone of various technologies, from facial recognition to medical diagnosis, mirroring our innate ability to identify recurring patterns. Understanding its types and how classification works, along with its diverse applications and challenges, highlights its growing importance in modern society.

With AI driving advancements in pattern recognition and application, organizations like Qodenext are well positioned to harness these capabilities for innovative solutions. By unlocking the full potential of pattern recognition, we pave the way for enhanced efficiency, informed decision-making, and transformative breakthroughs across industries.

FAQs: What is Pattern Recognition: How it Works, Types & Examples

1. What are the main challenges in pattern recognition?

The main challenges include variability in data, overfitting, high dimensionality, computational complexity, and the need for labelled data for supervised learning.

2. Does pattern recognition use memory?

Yes. It relies on memory to store and retrieve patterns, facilitating the identification of similarities and making predictions based on learned patterns.

3. What are the benefits of pattern recognition?

Benefits include automation of tasks, improved decision-making, enhanced efficiency, better understanding of data, and applications in diverse fields like medicine, finance, and technology.

4. How is AI used in pattern recognition?

AI is applied through machine learning techniques, such as neural networks and deep learning, that analyze data, identify patterns, and make predictions or classifications based on what they have learned.

5. How is classification used in pattern recognition?

Classification is the decision-making stage of pattern recognition. Once relevant features are extracted from data, classification assigns each input to a predefined category. For example, in handwriting recognition, the system analyzes shapes and classifies them as letters or numbers. Supervised classification uses labeled data, while unsupervised classification relies on natural groupings within the dataset.

6. What are common challenges in pattern recognition?

Pattern recognition systems face challenges such as noisy or incomplete data, high dimensionality, and variability in patterns due to distortions or environmental conditions. Another key challenge is the need for large, high-quality datasets to train accurate models. Balancing speed and accuracy in real-time applications is also a major concern for researchers and developers.

7. What are the main applications of pattern recognition?

Pattern recognition has a wide range of applications, from everyday technologies to specialized industries. Examples include speech recognition in virtual assistants, facial recognition in security systems, medical imaging for diagnostics, financial fraud detection, and quality inspection in manufacturing. These applications demonstrate its versatility across data formats—image, text, audio, and video.

8. Which industries benefit most from pattern recognition?

Industries such as healthcare, finance, automotive, retail, and security make extensive use of pattern recognition. In healthcare, it supports early disease detection. In finance, it helps identify fraudulent transactions. The automotive sector relies on it for self-driving systems, while retail uses it for customer behavior analysis. Security applications include surveillance and identity verification.

9. What is the difference between supervised and unsupervised pattern recognition?

Supervised pattern recognition uses labeled datasets, where models learn to classify input data into known categories. Unsupervised recognition, on the other hand, does not rely on predefined labels; instead, it discovers hidden patterns or clusters within the data. Both approaches are valuable depending on whether prior knowledge of categories is available.

10. How does pattern recognition relate to machine learning?

Pattern recognition is considered a core task within machine learning. Machine learning provides the algorithms and models—such as neural networks, decision trees, and support vector machines—that make recognition possible. The goal is to enable systems to automatically identify, classify, and learn from data patterns, improving performance over time without explicit programming.